Why does application data migration matter?

Digital transformation starts, and often stalls, with data migration.

Because behind every “legacy tool” lies the record of your enterprise: every requirement, change request, design iteration, and customer decision that shaped how your business runs today. That’s the real intellectual capital. And yet, it’s trapped in systems that are slowing innovation down.

Modernizing these tools isn’t optional anymore. Engineering organizations that upgrade outdated systems see 30–50% faster release cycles, according to McKinsey. Teams with unified, high-fidelity data spend 40% less time reconciling issues across tools. The business case is undeniable.

But here’s the paradox: while everyone wants to modernize, most enterprises hesitate for good reason. Migration projects have a reputation for being messy, risky, and disruptive. One wrong move, and years of data lineage, traceability, or compliance history can disappear. Gartner reports that up to 83% of migration projects exceed timelines or lose critical data fidelity. No leader wants to be in that statistic.

The good news? It doesn’t have to be that way. With the right strategy and technology, tool modernization can be seamless, preserving relationships, attachments, and traceability while ensuring zero downtime for teams who can’t afford a pause.

In this guide, we’ll unpack why application data migration is the first step toward true digital transformation and how it’s now easier than ever to achieve business continuity with zero disruption.

The true cost of data migration

1. Loss of Business Context - Not Just Data

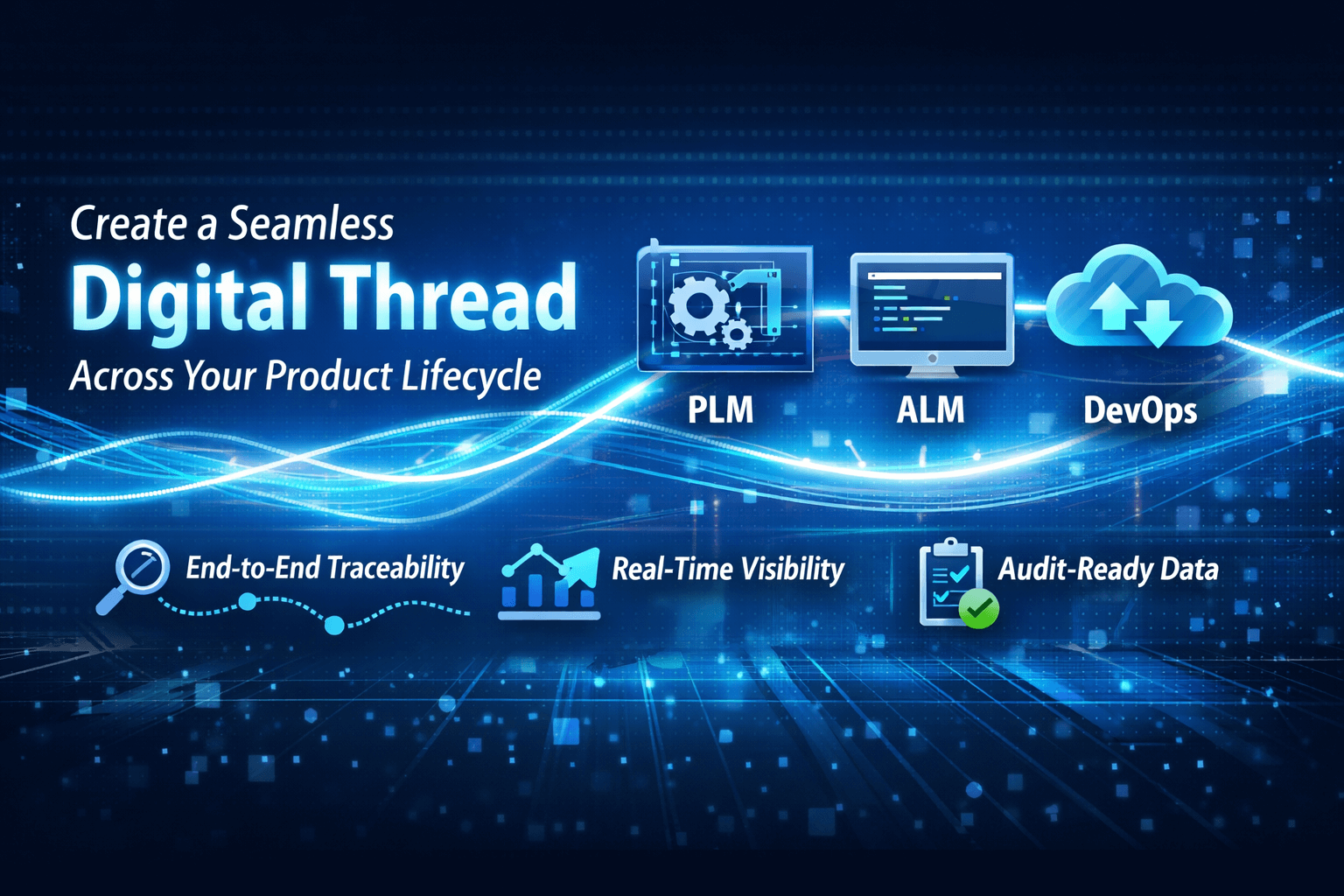

Most migration projects focus on “records moved,” not “relationships preserved.” But in systems like ALM, PLM, or CRM, the value lies in context: the links between requirements, test cases, commits, or change requests. When those relationships break, teams lose traceability, history, and decision logic.

Impact: Missing context causes compliance gaps, rework, and audit failures.

Hidden cost: Weeks or months spent manually restoring links or chasing missing information.

2. Misaligned data delays decisions

Traditional migration approaches often require system freezes, limited access, or parallel system runs, all of which slow down delivery.

Impact: Engineering or support teams can’t update live work items. Product releases slip.

Did you know? IDC found that 70% of migration projects cause at least one week of productivity loss across impacted teams.

3. Data Fidelity and Validation

Even if data moves, it doesn’t mean it matches. Different tools have different data models, field structures, and reference logic. Mapping them accurately, especially for attachments, comments, relationships, or change history, is complex and error-prone.

Impact: Teams lose trust in the new system. They revert to spreadsheets or duplicate entries.

Hidden cost: Post-migration cleanup and verification can consume 20–30% of total project effort.
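To make the mapping problem concrete, here is a minimal sketch of field and value mapping between two data models. The field names, the `FIELD_MAP` and `VALUE_MAP` tables, and the `map_record` helper are all hypothetical and chosen only for illustration; a real migration would derive them from the actual source and target schemas.

```python
# Hypothetical mapping from source field names to target field names.
FIELD_MAP = {
    "Summary": "title",
    "Details": "description",
    "Severity": "priority",
}

# Hypothetical value translation for fields whose allowed values differ.
VALUE_MAP = {
    "priority": {"Critical": "P1", "Major": "P2", "Minor": "P3"},
}


def map_record(source):
    """Translate one source record; return (target_record, problems)."""
    target = {}
    problems = []
    for field, value in source.items():
        if field not in FIELD_MAP:
            # Surface the gap instead of silently dropping the field.
            problems.append(f"unmapped field: {field}")
            continue
        target_field = FIELD_MAP[field]
        translations = VALUE_MAP.get(target_field)
        if translations is not None:
            if value not in translations:
                problems.append(f"no translation for {field}={value!r}")
                continue
            value = translations[value]
        target[target_field] = value
    return target, problems


record = {"Summary": "Login fails", "Severity": "Major", "Reviewer": "a.khan"}
mapped, issues = map_record(record)
print(mapped)
print(issues)
```

The point of returning `problems` alongside the mapped record is that every field the target cannot represent gets reported rather than lost, which is where fidelity issues usually start.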

4. Compliance, Audit, and Regulatory Exposure

In regulated industries (medical devices, aerospace, defense, finance), losing traceability isn’t a minor glitch; it’s a compliance failure. Auditors demand proof that design changes, approvals, and validations were preserved exactly as in the legacy system.

Impact: Audit failures, re-certifications, or fines.

Example: One missing approval log in a device change record can trigger months of revalidation work.

5. Cultural & Adoption Resistance

Even a flawless migration can fail if teams don’t trust the new system. People resist change when they feel their history is lost or their workflow is broken.

Impact: Low adoption, shadow tools, parallel documentation.

Hidden cost: Rebuilding confidence and retraining teams can double project timelines.

A recent migration moved 33,000 records with zero downtime, proving that seamless transitions are possible at any scale.

What does the right migration approach look like?

Enterprises don’t fail at migration because they lack intent; they fail because they treat it like a one-time data transfer instead of what it truly is: a live transition that must preserve business continuity end-to-end.

The right approach is defined by five non-negotiable principles: No disruption. No downtime. High fidelity. On-the-fly transformation. And effortless scalability.

Let’s unpack each.

Identifying the migration type

1. Migrating within the same tool ecosystem

Organizations often move from data center or on-premises servers to the cloud to lower operating expenses and increase agility, flexibility, and scalability. For instance, an enterprise may want to migrate data from an on-premises Azure DevOps Server instance, or an older Team Foundation Server (TFS) version, to a shared cloud-based Azure DevOps Services instance to improve collaboration across distributed teams and accelerate time-to-value.

2. Legacy migration

Many legacy tools are outdated and difficult to maintain. Their siloed architectures do not integrate well with modern DevOps or project management tools. Migrating from a legacy ALM or issue-tracking tool to a modern best-of-breed tool can lower operational costs and give teams a feature-rich solution with real-time access to data and analytics.

3. Migration to support business re-organization

Business events such as restructuring, divestment, and mergers and acquisitions often compel teams to migrate projects between heterogeneous tools whose data models differ. These migrations often require expertise to bridge the model mismatch between source and target systems.

4. Consolidation

Consolidation is required when multiple instances of an application are merged into a single instance; for example, three instances of Jira merged into one instance of Jira. Consolidation can also be implemented across heterogeneous tools, such as merging two instances of X tool into a single instance of Y tool. There can be varied reasons for merging data, including better visibility, traceability, and transparency.

5. Splitting

Depending on the migration type, scale, and complexity of your project, you need to select the most suitable migration approach, whether manual or automated. Manual or script-based approaches are time-consuming, error-prone, and difficult to scale. Here are some of the use cases that support each approach:

Selecting the right data migration strategy

Here’s a quick guide to help you make an informed choice before selecting a migration tool or process.

Developing your migration plan

A successful data migration strategy depends on careful planning, not just technology. These core steps ensure smooth execution, data integrity, and business continuity throughout the migration process.

1. Define the scope

Start with a full assessment of your source systems and data assets. A source-to-target audit helps uncover errors, gaps, or inconsistencies early. Validate whether your existing data aligns with the target system’s structure; cleaning and transformation may be needed before the application data migration begins.

2. Outline the technical approach
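As an illustration of what such an audit might check, the sketch below tests source records against a target schema before anything is moved. The `TARGET_SCHEMA` rules, the record IDs, and the `audit_record` helper are hypothetical, and the sketch assumes the source fields have already been renamed to the target's field names.

```python
# Hypothetical target schema: required flag, max length, and allowed values.
TARGET_SCHEMA = {
    "title": {"required": True, "max_length": 40},
    "status": {"required": True, "allowed": {"Open", "In Progress", "Done"}},
    "owner": {"required": False},
}


def audit_record(record_id, record):
    """Return a list of (record_id, field, finding) tuples for one record."""
    findings = []
    for field, rules in TARGET_SCHEMA.items():
        value = record.get(field)
        if value in (None, ""):
            if rules.get("required"):
                findings.append((record_id, field, "missing required value"))
            continue
        max_length = rules.get("max_length")
        if max_length is not None and len(str(value)) > max_length:
            findings.append((record_id, field, "exceeds max length"))
        allowed = rules.get("allowed")
        if allowed is not None and value not in allowed:
            findings.append((record_id, field, f"value not allowed: {value}"))
    return findings


source_records = {
    "REQ-1": {"title": "Export report as PDF", "status": "Open"},
    "REQ-2": {"title": "", "status": "Reopened", "owner": "j.lee"},
}

audit = [f for rid, rec in source_records.items() for f in audit_record(rid, rec)]
for finding in audit:
    print(finding)
```

Running a check like this early turns cleanup into a planned task with a known size, rather than a surprise during cutover.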

Decide whether a one-time, phased, or automated approach fits your needs. Document dependencies, migration sequence, and security protocols. Early stakeholder alignment helps finalize timelines and reduce risk.

3. Set the budget and ROI

Migration costs may seem high initially, but the ROI lies in reduced downtime, better data quality, and improved productivity. A clear data migration plan should show measurable gains like fewer defects, faster releases, and stronger compliance, turning a one-time investment into long-term value.

4. Choose the right solution

DIY tools may work for small projects, but large-scale migrations need specialized data migration tools and experts to handle complex models and preserve data fidelity. Consider scalability, downtime tolerance, and validation requirements before deciding whether to build or buy.

5. Prepare teams for change

Even the best data migration strategies can fail without user readiness. Plan onboarding, training, and communication early. Give teams time to test and adapt before full cutover to ensure quick adoption and minimal disruption.

6. Execute with control

Execution should be structured and reversible. Map data types, define fallback options, and plan rollback procedures. For zero downtime migration, execute in phases or parallel modes so teams can keep working during validation.
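One way to picture phased, reversible execution is a batch loop in which each batch either lands completely or is undone. This is a sketch under simplifying assumptions: the target is modeled as an in-memory dictionary, and `migrate_batch` and `migrate_in_phases` are hypothetical helpers, not part of any particular migration tool.

```python
def migrate_batch(batch, target):
    """Write one batch into target; undo the whole batch on any failure."""
    written = []
    try:
        for record_id, record in batch:
            if record_id in target:
                raise ValueError(f"duplicate id in target: {record_id}")
            target[record_id] = record
            written.append(record_id)
    except ValueError:
        # Roll back only what this batch wrote; earlier batches stay in place.
        for record_id in written:
            del target[record_id]
        return False
    return True


def migrate_in_phases(records, target, batch_size):
    """Migrate records in fixed-size batches; return one result per batch."""
    items = list(records.items())
    results = []
    for start in range(0, len(items), batch_size):
        batch = items[start:start + batch_size]
        results.append(migrate_batch(batch, target))
    return results


records = {"A": "rec-a", "B": "rec-b", "C": "rec-c", "D": "rec-d"}
target = {"D": "existing"}  # a conflicting record already in the target
results = migrate_in_phases(records, target, batch_size=2)
print(results)
print(target)
```

Keeping batches small limits how much work a single failure can undo, and teams can validate each completed batch while later ones are still in progress.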

7. Test and validate

Testing proves migration success. Verify integrity, completeness, and accuracy through validation of field mapping, relationships, and history preservation. Continue post-go-live audits to prevent data drift and ensure ongoing reliability.

8. Reconcile and recover
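The checks described here can be sketched as a comparison of two snapshots: one exported from the source, one from the target. The snapshot shape (`fields` and `links` per record) and the `fingerprint` and `validate` helpers are assumptions made for this example; it also assumes record IDs are identical on both sides, whereas a real migration usually needs an ID cross-reference table.

```python
import hashlib
import json


def fingerprint(record):
    """Stable hash of a record's field values (key order does not matter)."""
    payload = json.dumps(record["fields"], sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def validate(source, target):
    """Compare two {id: record} snapshots and return a report dict."""
    report = {"missing": [], "changed": [], "broken_links": []}
    for record_id, src in source.items():
        tgt = target.get(record_id)
        if tgt is None:
            report["missing"].append(record_id)  # completeness
            continue
        if fingerprint(src) != fingerprint(tgt):
            report["changed"].append(record_id)  # accuracy
        lost = set(src["links"]) - set(tgt["links"])
        if lost:
            report["broken_links"].append((record_id, sorted(lost)))  # relationships
    return report


source = {
    "REQ-1": {"fields": {"title": "Export PDF"}, "links": ["TC-7"]},
    "TC-7": {"fields": {"title": "Verify export"}, "links": []},
    "BUG-3": {"fields": {"title": "Crash on save"}, "links": ["REQ-1"]},
}
target = {
    "REQ-1": {"fields": {"title": "Export PDF"}, "links": []},
    "TC-7": {"fields": {"title": "Verify Export"}, "links": []},
}

report = validate(source, target)
print(report)
```

A record count alone would only catch the missing record in this example; the changed title and the dropped link need the field and relationship checks.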

Even well-planned migrations can hit issues like failed transfers or mismatched records. A solid data migration best practice is to have an error-recovery system with partial rollbacks, retries, and audit trails. This ensures fast resolution without disrupting productivity.
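A minimal sketch of the retry and audit-trail part of such a system follows; it does not cover partial rollback. `TransientError`, `migrate_with_retry`, and the stand-in `fake_transfer` function are hypothetical names introduced for illustration only.

```python
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (e.g. timeouts)."""


def migrate_with_retry(record_ids, transfer, max_attempts=3, delay_seconds=0.0):
    """Transfer each record with retries; return (failed_ids, audit_log)."""
    audit_log = []
    failed = []
    for record_id in record_ids:
        for attempt in range(1, max_attempts + 1):
            try:
                transfer(record_id)
                audit_log.append((record_id, attempt, "ok"))
                break
            except TransientError as exc:
                audit_log.append((record_id, attempt, f"error: {exc}"))
                time.sleep(delay_seconds)
        else:
            # All attempts used up; leave the record for reconciliation.
            failed.append(record_id)
    return failed, audit_log


# Stand-in transfer function: "B" fails once, "C" always fails.
calls = {}


def fake_transfer(record_id):
    calls[record_id] = calls.get(record_id, 0) + 1
    if record_id == "B" and calls[record_id] == 1:
        raise TransientError("timeout")
    if record_id == "C":
        raise TransientError("target unavailable")


failed, audit_log = migrate_with_retry(["A", "B", "C"], fake_transfer)
print(failed)
for entry in audit_log:
    print(entry)
```

Because every attempt is logged, the records that end up in `failed` come with a full history, which makes reconciliation a lookup rather than an investigation.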

Final thoughts

Every enterprise wants to move faster, but speed only matters when stability follows. Digital transformation doesn’t stall because of new tools; it stalls when critical data can’t move with the teams who rely on it. Effective application data migration keeps business continuity intact. It preserves context, history, and trust, so transformation feels seamless rather than disruptive.

Modernization succeeds when nothing valuable is left behind.